
...

- In Jan. 2017, each of the 25 Condo nodes was upgraded to include two GTX 1080 GPU cards.

- In 2017, Condo Investments from RCEAS and CBE faculty added 22 nodes and 16 NVIDIA GTX 1080 GPU cards.

- In 2018, Condo Investments from RCEAS and CAS faculty added 24 nodes and 48 NVIDIA RTX 2080 Ti GPU cards.

- In Mar. 2019, Condo Investments from RCEAS faculty added 1 node.

- In May-September 2020, Condo Investments from CAS, RCEAS and COH faculty added 8 nodes.

- In Spring 2022, Condo Investments from CAS and RCEAS faculty added 4 nodes.

As of Feb. 2022

| Processor Type | Number of Nodes | Number of CPU Cores | Number of GPUs | CPU Memory (GB) | GPU Memory (GB) | CPU TFLOPs | GPU TFLOPs | Annual SUs |
|---|---|---|---|---|---|---|---|---|
| 2.3 GHz E5-2650v3 | 9 | 180 | 10 | 1152 | 80 | 5.76 | 2.57 | 1,576,800 |
| 2.3 GHz E5-2670v3 | 33 | 792 | 62 | 4224 | 496 | 25.344 | 15.934 | 6,937,920 |
| 2.2 GHz E5-2650v4 | 14 | 336 | - | 896 | - | 9.6768 | - | 2,943,360 |
| 2.6 GHz E5-2640v3 | 1 | 16 | - | 512 | - | 0.5632 | - | 140,160 |
| 2.3 GHz Gold 6140 | 24 | 864 | 48 | 4608 | 528 | 41.472 | 18.392 | 7,568,640 |
| 2.6 GHz Gold 6240 | 6 | 216 | - | 1152 | - | 10.368 | - | 1,892,160 |
| 2.1 GHz Gold 6230R | 2 | 104 | - | 768 | - | 4.3264 | - | 911,040 |
| 3.0 GHz Gold 6248R | 1 | 48 | 5 | 192 | 200 | 3.072 | 48.5 | 420,480 |
| 3.0 GHz EPYC 7302 (Coming Soon) | 3 | 96 | 24 | 768 | 1152 | 4.3008 | 28.08 | 840,960 |
| Total | 93 | 2652 | 149 | 14272 | 2456 | 104.8832 | 112.6364 | 23,231,520 |
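Every Annual SUs figure in the table equals the row's core count times 8,760 (24 hours × 365 days), which suggests that one SU is charged per core-hour. The sketch below reproduces the figures under that assumption; `annual_sus` is a hypothetical helper for illustration, not a command provided on Sol.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not a Sol command): annual SUs for a set of nodes,
# ASSUMING 1 SU = 1 core-hour and 24 x 365 = 8,760 hours per year.
# Arguments come in pairs: NODES CORES_PER_NODE [NODES CORES_PER_NODE ...]
annual_sus() {
  if (( $# == 0 || $# % 2 != 0 )); then
    echo "usage: annual_sus NODES CORES [NODES CORES ...]" >&2
    return 1
  fi
  local total_cores=0
  while (( $# > 0 )); do
    total_cores=$(( total_cores + $1 * $2 ))   # nodes x cores per node
    shift 2
  done
  echo $(( total_cores * 24 * 365 ))
}

annual_sus 9 20          # 2.3 GHz E5-2650v3 row: prints 1576800
annual_sus 1 48 3 32     # the two newest rows combined: prints 1261440
```

Passing several node groups at once gives the combined allocation, which is how the per-investor totals below add up.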

System Configuration


...


Intel Xeon processors: AVX2 and AVX-512 frequencies

...
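Which vector extensions are available depends on the processor type in the hardware table: the Haswell and Broadwell Xeon E5 (v3/v4) processors and the AMD EPYC 7302 support AVX2 but not AVX-512, while the Skylake and Cascade Lake Xeon Gold processors support both. A minimal way to check a given node, assuming Linux and its `/proc/cpuinfo` file (the function name `simd_flags` is hypothetical):

```bash
#!/usr/bin/env bash
# Hypothetical helper: list the AVX2 / AVX-512 feature flags a CPU advertises.
# ASSUMES a Linux node; reads /proc/cpuinfo unless another file in the same
# format is given as $1.
simd_flags() {
  local cpuinfo="${1:-/proc/cpuinfo}"
  # Each logical CPU repeats the same "flags" line, so the first one is enough.
  grep -m1 '^flags' "$cpuinfo" \
    | tr -s ' \t' '\n' \
    | grep -E '^avx(2|512)' \
    | LC_ALL=C sort -u
}

# Usage on a compute node:
#   simd_flags
```

An empty result for the `avx512` prefix means code compiled for AVX-512 will not run on that node.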

Dimitrios Vavylonis, Department of Physics: 1 20-core compute node

Annual allocation: 175,200 SUs

Wonpil Im, Department of Biological Sciences:

- 25 24-core compute nodes with 2 GTX 1080 cards per node (5,256,000 SUs)

- 12 36-core compute nodes with 4 RTX 2080 Ti cards per node (3,784,320 SUs)

- 3 32-core compute nodes with 8 A40 GPUs per node (840,960 SUs)

Total annual allocation: 9,881,280 SUs
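The total can be checked from the three node groups, assuming (as every row of the hardware table implies) that one SU corresponds to one core-hour over 24 × 365 = 8,760 hours per year:

$$
(25 \times 24 + 12 \times 36 + 3 \times 32) \times 8760 = (600 + 432 + 96) \times 8760 = 1128 \times 8760 = 9{,}881{,}280 \text{ SUs}
$$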

Anand Jagota, Department of Chemical Engineering: 1 24-core compute node

Annual allocation: 210,240 SUs

Brian Chen, Department of Computer Science and Engineering:

- 1 24-core compute node (210,240 SUs)

- 2 52-core compute nodes (911,040 SUs)

Annual allocation: 1,121,280 SUs

Edmund Webb III & Alparslan Oztekin, Department of Mechanical Engineering and Mechanics: 6 24-core compute nodes

Annual allocation: 1,261,440 SUs

Jeetain Mittal & Srinivas Rangarajan, Department of Chemical Engineering: 13 24-core Broadwell-based compute nodes and 16 GTX 1080 cards

Annual allocation: 2,733,120 SUs

Seth Richards-Shubik, Department of Economics

Annual allocation: 140,160 SUs

Ganesh Balasubramanian, Department of Mechanical Engineering and Mechanics: 7 36-core Skylake-based compute nodes

Annual allocation: 2,207,520 SUs

Department of Industrial and Systems Engineering: 2 36-core Skylake-based compute nodes

Annual allocation: 630,720 SUs

Lisa Fredin, Department of Chemistry:

- 2 36-core Skylake-based compute nodes

- 4 36-core Cascade Lake-based compute nodes

Annual allocation: 1,892,160 SUs

Paolo Bocchini, Department of Civil and Environmental Engineering: 1 24-core Broadwell-based compute node

Annual allocation: 210,240 SUs

Hannah Dailey, Department of Mechanical Engineering and Mechanics: 1 36-core Skylake-based compute node

Annual allocation: 315,360 SUs

College of Health: 2 36-core Cascade Lake-based compute nodes

Annual allocation: 630,720 SUs

Keith Moored, Department of Mechanical Engineering and Mechanics: 1 48-core Cascade Lake Refresh compute node with 5 A100 GPUs

Annual allocation: 420,480 SUs

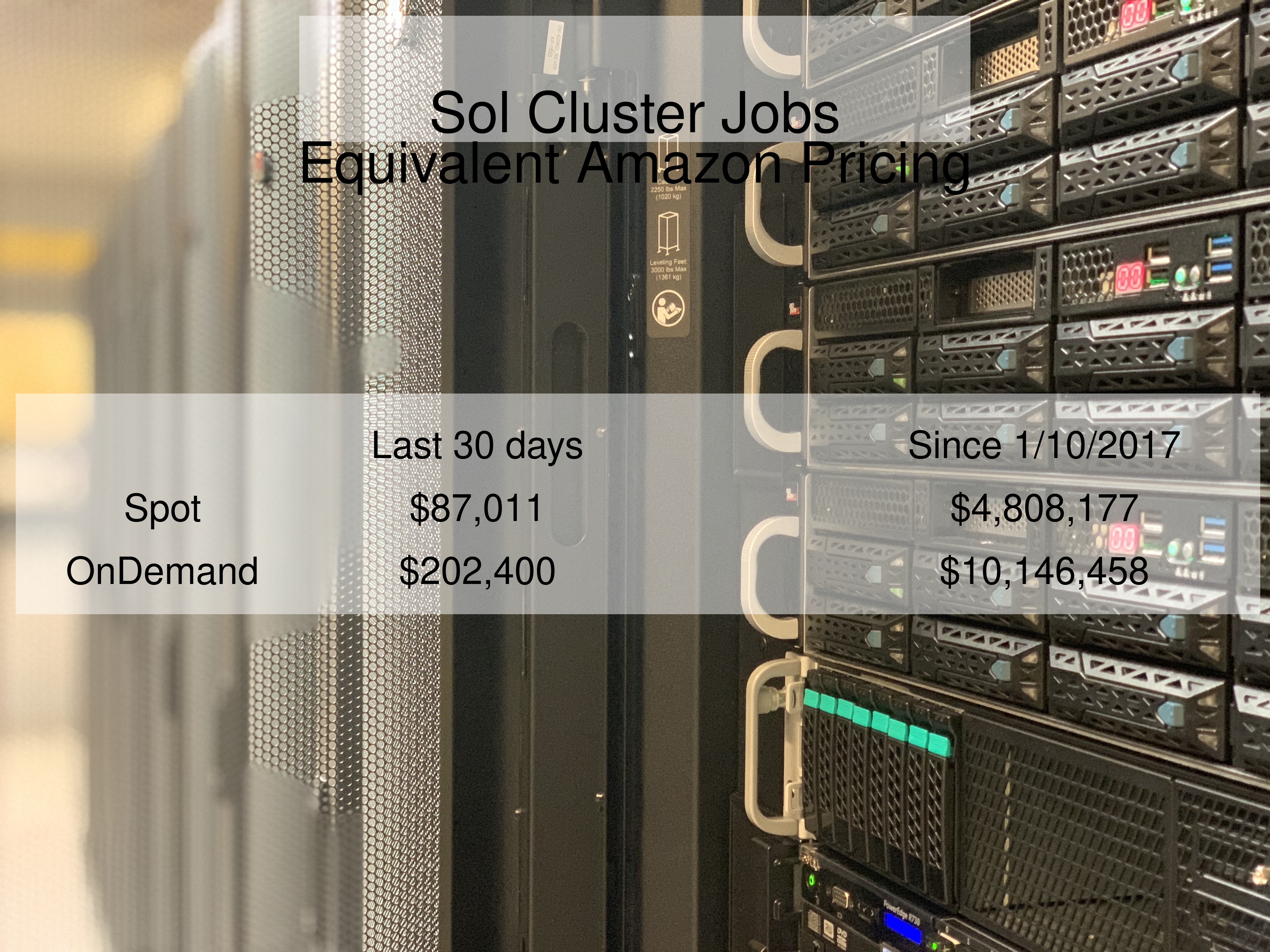

Comparison with AWS

Methods/Notes:

...

```
ssh username@sol.cc.lehigh.edu
```

If you are off campus, there are two options:

- Start a VPN session and then log in to Sol using the ssh command above.

- Use the SSH gateway as a jump host: log in to the gateway first, then log in to Sol by running the ssh command above at the gateway prompt. If your ssh client is OpenSSH 7.3 or newer, you can combine both steps with the `-J` option:

```
ssh -J username@ssh.cc.lehigh.edu username@sol.cc.lehigh.edu
```

If you use the SSH gateway, you may want to add the following to the ${HOME}/.ssh/config file on your local system:

```
Host *ssh
  HostName ssh.cc.lehigh.edu
  Port 22
  # This is an example - replace alp514 with your Lehigh ID
  User alp514

Host *sol
  HostName sol.cc.lehigh.edu
  Port 22
  User <LehighID>
  ProxyCommand ssh -W %h:%p ssh
```
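On OpenSSH 7.3 or newer, the `ProxyJump` option is a shorter alternative to the `ProxyCommand` line. A sketch of the equivalent `Host *sol` entry, reusing the `Host *ssh` gateway entry, with `<LehighID>` standing for your Lehigh ID:

```
Host *sol
  HostName sol.cc.lehigh.edu
  Port 22
  User <LehighID>
  # Connect through the host alias "ssh" (the gateway entry)
  ProxyJump ssh
```

Use either `ProxyJump` or `ProxyCommand` in an entry, not both: whichever appears first takes effect.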

This simplifies the ssh and scp (file transfer) commands. You will be prompted for your password twice: first for the SSH gateway and then for Sol.

```
ssh sol
scp sol:<path to source directory>/filename <path to destination directory>/filename
```

If you use public key authentication, protect your private key with a passphrase; a key without a passphrase is a security risk. Use ssh-agent and ssh-add to manage your keys so that you only need to enter the passphrase once per session. See https://kb.iu.edu/d/aeww for details.

Windows users will need to install an SSH client to access Sol. Lehigh Research Computing recommends MobaXterm, since it can be configured to use the SSH Gateway as a jump host. DUO authentication is enabled for faculty and staff on the SSH Gateway. If a window pops up asking for a password, enter your Lehigh password. The second pop-up, which only says DUO Login, is for DUO: enter 1 for a DUO Push or 2 for a call to your registered phone.